
Ultimate Guide to Ethical AI Revenue Forecasting

Apr 2, 2026

Ethical AI forecasting prevents bias, protects data, and restores trust in revenue predictions.

AI revenue forecasting can reduce forecast errors by 20–50%, but only if ethical risks like bias, lack of transparency, and data privacy concerns are addressed. Without these safeguards, forecasts can mislead decision-making, skew quotas, and erode trust.

Here’s what you need to know:

Bias Risks: Historical, selection, confirmation, and aggregation biases can distort predictions and misallocate resources.

Transparency Challenges: AI’s “black box” nature makes it hard to understand and trust predictions, especially for high-stakes decisions.

Data Privacy: AI relies on massive datasets, increasing risks of misuse, breaches, and regulatory non-compliance.

Accountability Gaps: Without clear oversight, errors persist, and it’s unclear who’s responsible for fixing them.

How to Mitigate These Risks:

Audit and diversify training data to reduce bias.

Use Explainable AI (XAI) tools like SHAP or LIME to clarify predictions.

Establish strong data governance and consent protocols.

Ensure regular human oversight and feedback loops.


Ethical Risks in AI Revenue Forecasting

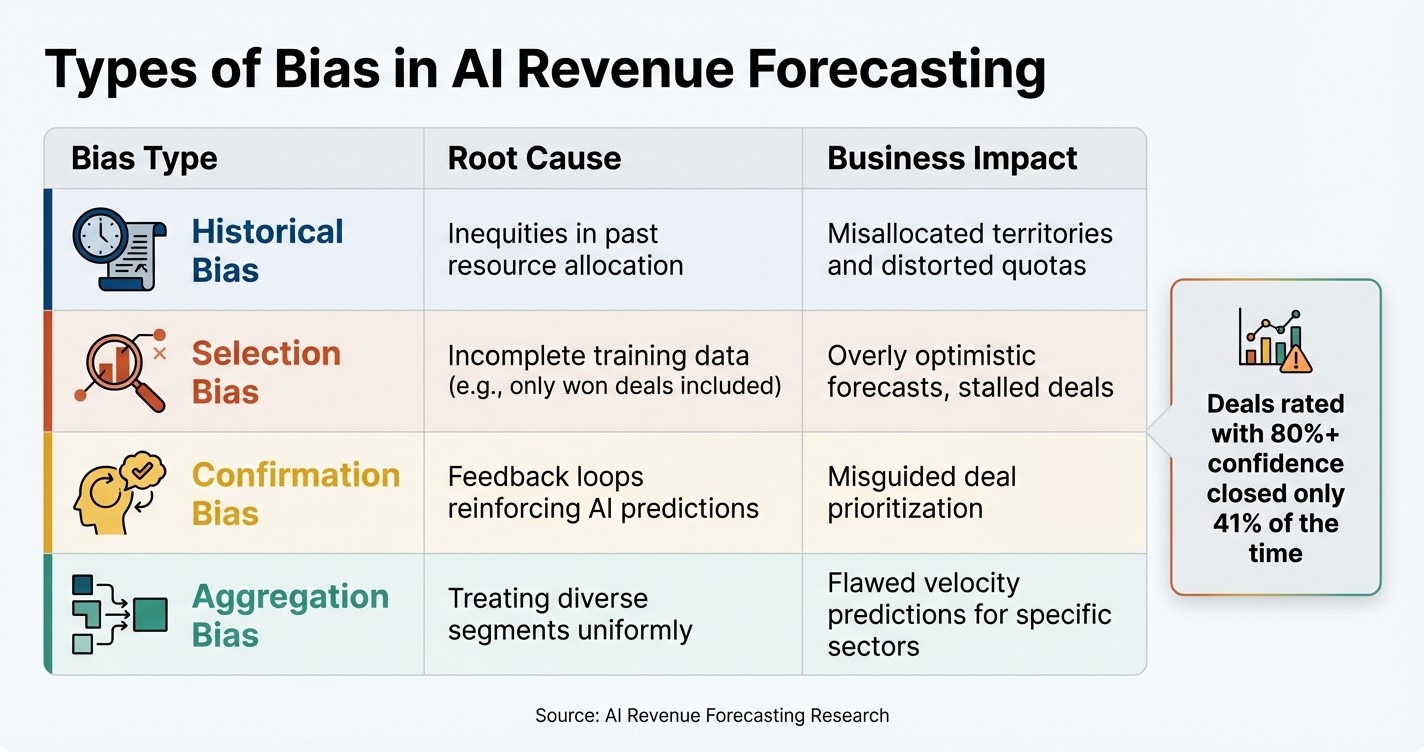

Four Types of AI Forecasting Bias: Causes and Business Impact

AI revenue forecasting offers the potential for more precise predictions, but it also introduces ethical challenges that can erode trust, misdirect resources, and skew quotas [1]. Recognizing these risks is a crucial step in creating systems that are both effective and fair.

Bias in Predictions

Bias is one of the most pressing ethical concerns in AI forecasting. It often stems from models trained on flawed or incomplete data, leading to predictions that unfairly favor certain deals, segments, or sales representatives while undervaluing others.

Historical bias happens when AI learns from past resource allocation patterns rather than the actual potential of opportunities. For instance, if large enterprise deals historically received more attention and resources, the AI might mistakenly conclude that these deals are inherently superior, sidelining smaller opportunities [1].

Selection bias arises when training data excludes critical information. Models trained only on "closed-won" deals, for example, ignore stalled or lost deals, resulting in overly optimistic pipeline predictions [1].
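A quick way to catch selection bias before training is to check which outcomes the training set actually contains. The sketch below assumes each deal record is a dict with a hypothetical `outcome` field labeled `"won"`, `"lost"`, or `"stalled"`. The labels and the 5% threshold are placeholders to adapt to your own CRM.

```python
from collections import Counter

# Hypothetical outcome labels; map your CRM's closed/lost/stalled stages onto these.
REQUIRED_OUTCOMES = ("won", "lost", "stalled")


def outcome_coverage(deals, min_share=0.05):
    """Return each outcome's share of the training set and the outcomes below min_share."""
    if not deals:
        raise ValueError("training set is empty")
    counts = Counter(d["outcome"] for d in deals)
    total = len(deals)
    shares = {o: counts.get(o, 0) / total for o in REQUIRED_OUTCOMES}
    missing = sorted(o for o, share in shares.items() if share < min_share)
    return shares, missing


def naive_win_rate(deals):
    """Share of deals that closed-won; inflated when lost and stalled deals are filtered out."""
    return sum(d["outcome"] == "won" for d in deals) / len(deals)


# Illustrative data: 3 won, 5 lost, 2 stalled.
all_deals = [{"outcome": "won"}] * 3 + [{"outcome": "lost"}] * 5 + [{"outcome": "stalled"}] * 2
won_only = [d for d in all_deals if d["outcome"] == "won"]

print(naive_win_rate(all_deals), naive_win_rate(won_only))  # 0.3 vs 1.0
print(outcome_coverage(won_only)[1])  # ['lost', 'stalled']
```

A training set filtered to closed-won deals reports a perfect win rate and fails the coverage check, which is exactly the optimistic skew described above.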

Confirmation bias and feedback loops create self-reinforcing cycles. When AI assigns a high score to a deal, sales teams may prioritize it, investing more time and resources. This increased attention can influence the outcome, falsely validating the AI's prediction. In one study, deals rated by representatives with 80%+ confidence closed only 41% of the time, revealing a significant "optimism tax" [2].
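One way to measure an "optimism tax" like this is a calibration check: bucket past deals by the probability they were given (by a rep or a model) and compare each bucket's average probability with its actual close rate. The sketch below assumes you can export `(probability, won)` pairs for closed deals; the bucket edges are arbitrary choices.

```python
from collections import defaultdict


def calibration_table(history, edges=(0.0, 0.2, 0.4, 0.6, 0.8, 1.0)):
    """history: iterable of (probability, won) pairs for deals that have closed.

    Returns {(low, high): {"n", "predicted", "actual", "gap"}}. A positive gap means
    deals in that bucket closed less often than their probability implied.
    Probabilities outside [0, 1] fall into no bucket and are skipped.
    """
    buckets = defaultdict(list)
    for prob, won in history:
        for low, high in zip(edges, edges[1:]):
            # Half-open buckets, except the top one also includes its upper edge.
            if low <= prob < high or (high == edges[-1] and prob == high):
                buckets[(low, high)].append((prob, won))
                break
    table = {}
    for key in sorted(buckets):
        rows = buckets[key]
        predicted = sum(p for p, _ in rows) / len(rows)
        actual = sum(bool(w) for _, w in rows) / len(rows)
        table[key] = {"n": len(rows), "predicted": predicted, "actual": actual,
                      "gap": predicted - actual}
    return table


# Illustrative data: ten deals scored 0.85, of which four closed.
history = [(0.85, True)] * 4 + [(0.85, False)] * 6 + [(0.3, True), (0.3, False)]
for bucket, row in calibration_table(history).items():
    print(bucket, row)
```

A large positive gap in the top bucket is the pattern the study describes: high-confidence deals closing far less often than their scores suggest.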

Aggregation bias occurs when diverse segments are treated as if they are identical. For example, using a single 90-day sales cycle forecast for industries like healthcare (which might require 120 days) and tech (which averages 60 days) can lead to systemic errors [1].
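Aggregation bias shows up when you compare a single blended assumption with per-segment figures. The sketch below uses the illustrative cycle lengths from the example above; the `segment` and `cycle_days` field names are hypothetical.

```python
from statistics import median


def median_cycle_by_segment(deals):
    """Median sales-cycle length (days) for each segment."""
    by_segment = {}
    for deal in deals:
        by_segment.setdefault(deal["segment"], []).append(deal["cycle_days"])
    return {seg: median(days) for seg, days in by_segment.items()}


def blended_assumption_error(deals, blended_days):
    """Days by which one blended cycle assumption misses each segment's median.

    Negative: the blended figure is too short (deals slip past forecast close dates).
    Positive: the blended figure is too long (revenue arrives earlier than forecast).
    """
    return {seg: blended_days - m for seg, m in median_cycle_by_segment(deals).items()}


# Hypothetical field names and illustrative cycle lengths.
deals = (
    [{"segment": "healthcare", "cycle_days": d} for d in (110, 120, 130)]
    + [{"segment": "tech", "cycle_days": d} for d in (55, 60, 65)]
)
print(blended_assumption_error(deals, blended_days=90))
```

A 90-day assumption here is 30 days too short for healthcare and 30 days too long for tech, so forecasting each segment with its own cycle length removes both errors.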

| Bias Type | Root Cause | Business Impact |
|---|---|---|
| Historical | Inequities in past resource allocation | Misallocated territories and distorted quotas [1] |
| Selection | Incomplete training data (e.g., only won deals) | Overly optimistic forecasts that overlook stalled deals [1] |
| Confirmation | Feedback loops that reinforce predictions | Misguided deal prioritization [1] |
| Aggregation | Treating diverse segments uniformly | Flawed velocity predictions for specific sectors [1] |

Lack of Transparency and Explainability

AI models often operate as "black boxes", producing forecasts without clear explanations. This lack of transparency can undermine trust. For example, if a manager overrides a sales rep's forecast based on an opaque AI recommendation, the team may lose confidence in the tool [4][5]. Sales professionals need to understand the reasoning behind predictions - not just to trust the numbers but also to identify and correct potential errors.

Explainability becomes even more crucial for high-stakes decisions like territory reassignments, quota changes, or resource planning. Tools that provide clear reasoning, such as flagging risks like "No competitor mentioned", empower teams to validate AI outputs and address errors before they escalate [1][4].

"The best forecasts happen when humans and machines challenge each other." - Priya [5]

Opacity also complicates accountability. Without understanding how an AI reached its conclusions, diagnosing mistakes and implementing fixes becomes nearly impossible. Moreover, the vast amounts of data these systems rely on introduce serious privacy concerns.

Data Privacy Risks

AI forecasting systems require massive datasets, often pulling from sources like call transcripts, email sentiment, calendar activity, and buyer engagement patterns. This reliance on extensive data increases the risk of exposing sensitive information or misusing it [8].

Data repurposing is a key concern. Information collected for one purpose, such as closing a sale, might later be used to train AI models without explicit consent [6][8]. AI can even infer sensitive details, like political views or health conditions, from seemingly harmless data points [7].

"AI systems are so data-hungry and intransparent that we have even less control over what information about us is collected, what it is used for, and how we might correct or remove such personal information." - Jennifer King, Privacy and Data Policy Fellow, Stanford HAI [6]

Efforts to anonymize data often fall short because AI can link disparate datasets, making true anonymization nearly impossible [7]. Risks like data breaches or prompt injection attacks further underscore the need for strong data governance. With 80% of businesses facing cybercrime due to poor data handling, the importance of robust safeguards and compliance with growing regulatory demands cannot be overstated [9][5].

Accountability Gaps

When AI systems make inaccurate or unethical predictions, assigning responsibility can become murky. Is the fault with the data scientist who built the model? The sales operations team that deployed it? The sales reps providing input data? Or the executives acting on the forecasts? This lack of clarity allows errors to persist unchecked.

The stakes are especially high for revenue-critical decisions. For instance, a 10% forecasting error can cost a $50M company about $1.2M [2]. Without clear accountability, there’s little motivation to audit models, question assumptions, or recalibrate predictions.

Human oversight is critical yet often lacking. Sales reps, already spending 40–60% of their time on administrative tasks [3], may not have the capacity to validate AI recommendations. Meanwhile, data science teams may lack the industry-specific knowledge to identify when forecasts fail to align with reality. Regular reviews comparing AI predictions to actual outcomes, combined with expert validation for crucial decisions, are essential to ensuring that human judgment complements machine intelligence rather than being sidelined [1].

How to Reduce Ethical Risks

Tackling issues like bias, lack of transparency, privacy concerns, and accountability gaps requires specific, actionable steps. By addressing these areas, organizations can reduce forecast errors by 20% to 50% while fostering trust within their teams [1].

Improving Data Quality and Diversity

The backbone of ethical AI forecasting lies in using fair and representative data. Begin by auditing your training data to identify and correct historical inequities. For example, if enterprise deals have historically been prioritized over SMB opportunities, your AI might incorrectly assume enterprise deals are naturally more lucrative rather than recognizing the disparity in attention they’ve received [1].

To minimize bias, expand your data sources beyond CRM fields. Include activity data, relationship intelligence, and engagement metrics to reduce dependence on any single, potentially biased source [1]. AI tools can also help by collecting objective customer interaction data, which reduces manual entry errors and optimism bias [12].

Another useful approach is to segment forecasts by region, product line, or sales motion and measure bias in each segment as the average difference between forecasted and actual values. A difference that stays consistently positive or negative points to a systematic error rather than random noise. Regularly - ideally quarterly - compare AI predictions to actual outcomes and adjust model weightings as needed [1][12][13].
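Measuring bias per segment can be as simple as averaging forecast minus actual. The sketch below assumes you have one `(segment, forecast, actual)` tuple per period; a positive value means the segment is consistently over-forecast. The record layout and segment names are illustrative.

```python
from collections import defaultdict


def bias_by_segment(records):
    """records: iterable of (segment, forecast, actual) tuples, one per period.

    Returns mean error (forecast - actual) and percent bias (total error / total actual)
    per segment. Positive values indicate over-forecasting (optimism).
    """
    totals = defaultdict(lambda: {"error": 0.0, "actual": 0.0, "n": 0})
    for segment, forecast, actual in records:
        t = totals[segment]
        t["error"] += forecast - actual
        t["actual"] += actual
        t["n"] += 1
    return {
        seg: {
            "mean_error": t["error"] / t["n"],
            "pct_bias": t["error"] / t["actual"] if t["actual"] else float("nan"),
        }
        for seg, t in totals.items()
    }


# Illustrative records; segment names are placeholders.
records = [
    ("EMEA", 120, 100), ("EMEA", 110, 100),  # consistently over-forecast
    ("NA", 90, 100), ("NA", 100, 100),       # slightly under-forecast
]
for segment, stats in bias_by_segment(records).items():
    print(segment, stats)
```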

Implementing Explainable AI (XAI)

Transparency is key to building trust in AI systems. Explainable AI (XAI) tools clarify why a prediction was made, helping teams determine whether the reasoning is based on real predictive power or rooted in historical bias [1].

Frameworks like LIME (Local Interpretable Model-Agnostic Explanations) and SHAP (SHapley Additive exPlanations) can break down individual predictions and highlight which factors - like deal size, engagement levels, or past win rates - are influencing probability scores.
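To show what SHAP-style attributions look like without pulling in a library, the sketch below assumes the deal score comes from a logistic-regression model with made-up weights. For a linear model, and treating features as independent, each feature's SHAP value in log-odds space reduces to `weight * (value - background mean)`, so the contributions plus the background baseline add back up to the deal's score. The feature names, weights, and background deals are all hypothetical.

```python
import math

# Hypothetical logistic-regression weights (log-odds units) for a deal-scoring model.
WEIGHTS = {"deal_size_k": -0.004, "meetings_last_30d": 0.35, "rep_win_rate": 2.0}
INTERCEPT = -1.0


def log_odds(deal):
    return INTERCEPT + sum(w * deal[f] for f, w in WEIGHTS.items())


def probability(deal):
    return 1.0 / (1.0 + math.exp(-log_odds(deal)))


def linear_shap(deal, background):
    """Per-feature contributions w_i * (x_i - mean_i) relative to a background set.

    For a linear model with independent features these are exact SHAP values in
    log-odds space: baseline + sum(contributions) == log_odds(deal).
    """
    means = {f: sum(d[f] for d in background) / len(background) for f in WEIGHTS}
    baseline = INTERCEPT + sum(w * means[f] for f, w in WEIGHTS.items())
    return baseline, {f: w * (deal[f] - means[f]) for f, w in WEIGHTS.items()}


background = [
    {"deal_size_k": 50, "meetings_last_30d": 2, "rep_win_rate": 0.25},
    {"deal_size_k": 150, "meetings_last_30d": 4, "rep_win_rate": 0.35},
]
deal = {"deal_size_k": 400, "meetings_last_30d": 1, "rep_win_rate": 0.30}
baseline, contributions = linear_shap(deal, background)
print(f"P(close) = {probability(deal):.2f}")
for feature, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{feature:>18}: {value:+.3f} log-odds")
```

Here the deal's large size and low meeting count pull its score down. For fitted models, the `shap` package's `LinearExplainer` computes the same kind of attribution; tree-based or neural models would need a different explainer or LIME.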

"We started playing with connecting that to then the OpenAI API and being able to start doing things like coding the notes that reps were adding to kind of say, is this positive, neutral or negative? And then you can start also then collecting data on that and over time saying like, oh, let me actually normalize it based on recognizing some reps are more pessimistic or some are more optimistic and you can actually start to really play around." - Rachel Krall, The Go-to-Market Podcast [1]

AI can also help code qualitative notes from sales reps, identifying patterns of optimism or pessimism in their inputs. By normalizing these tendencies, forecast predictions can better reflect actual trends rather than individual biases [1].
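The quote describes scoring note sentiment with an LLM. The sketch below covers only the simpler numeric half of the idea, assuming each rep submits a close probability per deal. It estimates each rep's historical optimism as average submitted probability minus actual win rate and subtracts that offset from new submissions. The data layout and the additive correction are assumptions, not a standard method, and with few closed deals per rep the offsets will be noisy.

```python
def rep_offsets(history):
    """history: iterable of (rep, submitted_probability, won) for closed deals.

    Offset = mean submitted probability - actual win rate.
    Positive: the rep is historically optimistic; negative: pessimistic.
    """
    stats = {}
    for rep, prob, won in history:
        s = stats.setdefault(rep, {"prob": 0.0, "wins": 0, "n": 0})
        s["prob"] += prob
        s["wins"] += int(won)
        s["n"] += 1
    return {rep: s["prob"] / s["n"] - s["wins"] / s["n"] for rep, s in stats.items()}


def normalize(rep, submitted, offsets):
    """Shift a new submission by the rep's offset and clamp it to [0, 1]."""
    return min(1.0, max(0.0, submitted - offsets.get(rep, 0.0)))


# Illustrative history; rep names are placeholders.
history = (
    [("alice", 0.8, True)] * 2 + [("alice", 0.8, False)] * 2  # says 80%, wins 50%
    + [("bob", 0.4, True)] * 2 + [("bob", 0.4, False)] * 2    # says 40%, wins 50%
)
offsets = rep_offsets(history)
print(normalize("alice", 0.9, offsets), normalize("bob", 0.5, offsets))
```

After normalization, an optimistic 90% from one rep and a cautious 50% from another can land at the same adjusted probability; reps with no history pass through unchanged.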

Once these explainability tools are in place, it’s crucial to ensure robust data governance to protect sensitive customer data and maintain compliance.

Establishing Data Governance and Consent Protocols

Strong data governance is essential for meeting privacy standards and complying with regulations like GDPR, CCPA, the EU AI Act, and the NIST AI Risk Management Framework [11][14][17]. Use encryption, anonymization, and clear opt-in/opt-out policies to safeguard data [10][14][15][16].
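Before call or CRM records reach a training pipeline, direct identifiers can be replaced with keyed hashes. This is pseudonymization rather than true anonymization: as noted earlier, linkage attacks remain possible, and anyone holding the key can re-derive tokens, so the key must live in a secrets manager rather than next to the data. The field names and key below are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Map an identifier to a stable keyed token (HMAC-SHA256).

    The same value and key always yield the same token, so records can still be
    joined on the token without exposing the raw identifier.
    """
    normalized = value.strip().lower()
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identifiers(record: dict, identifier_fields: set, key: bytes) -> dict:
    """Return a copy of the record with identifier fields replaced by tokens."""
    return {
        field: pseudonymize(str(value), key) if field in identifier_fields else value
        for field, value in record.items()
    }


# Hypothetical key for illustration only; load the real one from a secrets manager.
KEY = b"example-key-do-not-use"
record = {"contact_email": "Jane.Doe@Example.com", "stage": "Commit", "amount": 48000}
print(strip_identifiers(record, {"contact_email"}, KEY))
```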

When working with vendors, conduct thorough assessments to ensure they meet your encryption and data storage standards. Limit third-party access to sensitive information [15]. Additionally, verify the origin of AI developers and consult resources like the FBI or State Department to evaluate cybersecurity risks tied to certain regions [10]. Avoid free generative AI tools for sensitive forecasting tasks, as these platforms often use input data to train their models, which could compromise privacy [10].

A recent example highlights the risks: in March 2024, the SEC settled charges against two investment advisory firms, Delphia (USA) Inc. and Global Predictions Inc., which agreed to pay $400,000 in combined civil penalties for making misleading claims about their use of AI. The case underscores the importance of maintaining transparency and compliance [10].

Adding Human Oversight and Feedback Loops

Even with improved data practices and transparent AI models, human oversight remains critical to ethical forecasting. Regular managerial reviews of AI outputs should be mandatory, and companies should establish an AI advisory board to track and address recurring errors [11][15][17].

"It's important to keep human oversight front and center. It's part of maintaining transparency, which is a key component for building trust in AI systems." - Kapish Vanvaria, EY Global and Americas Risk Consulting Leader, EY [17]

Involve a diverse group of stakeholders - spanning legal, ethics, technology, and business operations - to identify blind spots in AI models before they lead to major decisions [11]. For instance, require a review of AI-generated forecasts before implementing changes to territory designs or sales quotas [15][17].

To further refine predictions, use time decay methods by adjusting deal probabilities based on how long an opportunity has stalled in a particular stage [13]. Standardize terminology across departments - ensuring terms like "Commit", "Best Case", and "Pipeline" mean the same thing everywhere - to prevent data silos and inconsistencies [13].
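Time decay can be expressed as a half-life: once a deal sits in a stage longer than expected, its probability is halved for every additional half-life period. The expected stage durations and 30-day half-life below are placeholders, not recommended values, and other decay shapes (linear, step) work just as well.

```python
def decayed_probability(base_prob, days_in_stage, expected_days, half_life_days=30.0):
    """Reduce a stage probability once a deal overstays its expected time in stage.

    No decay until days_in_stage exceeds expected_days; after that the probability
    halves every half_life_days.
    """
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    overdue = max(0, days_in_stage - expected_days)
    return base_prob * 0.5 ** (overdue / half_life_days)


# Hypothetical expected durations per stage, in days.
EXPECTED_DAYS = {"Discovery": 14, "Proposal": 21, "Negotiation": 30}

print(decayed_probability(0.6, days_in_stage=20, expected_days=EXPECTED_DAYS["Proposal"]))
print(decayed_probability(0.6, days_in_stage=51, expected_days=EXPECTED_DAYS["Proposal"]))
```

A Proposal-stage deal still inside its 21-day window keeps its 0.6 probability; one that has overstayed by 30 days drops to 0.3.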

Implementation Steps for Ethical AI Forecasting

Ethical AI forecasting requires a structured approach to ensure accuracy and trust. An AI ethics audit for a mid-sized project typically takes 2–4 weeks, and the payoff justifies the effort: by following these steps, organizations can cut forecast errors by 20% to 50% while strengthening team confidence [18][1].

Conducting Data Audits and Model Validation

The first step is defining the scope of your audit. Identify the AI models, data flows, and stakeholders involved, with a focus on ethical concerns like fairness, privacy, and transparency [18]. Don't limit this process to technical teams. Instead, bring together a diverse group - including AI engineers, legal experts, ethicists, and external reviewers - to challenge assumptions and ensure a broader perspective [18].

Next, examine your training data for imbalances across areas such as region, product line, or deal size. This helps the audit surface allocation-driven biases instead of letting the model simply reproduce existing disparities [1].
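One simple imbalance check compares each segment's share of the training data with its share of a reference population. In the sketch below, the `size` field, the 10-point tolerance, and the choice of the current open pipeline as the reference are all assumptions; the reference could just as well be your addressable market by segment.

```python
from collections import Counter


def representation_gaps(training_rows, reference_rows, key, tolerance=0.10):
    """Compare each segment's share of the training data with its share of a
    reference population (here, assumed to be the current open pipeline).

    Returns {segment: training_share - reference_share} for gaps larger than tolerance.
    Positive: over-represented in training; negative: under-represented.
    """
    train = Counter(row[key] for row in training_rows)
    ref = Counter(row[key] for row in reference_rows)
    n_train, n_ref = sum(train.values()), sum(ref.values())
    gaps = {}
    for segment in sorted(set(train) | set(ref)):
        diff = train[segment] / n_train - ref[segment] / n_ref
        if abs(diff) > tolerance:
            gaps[segment] = diff
    return gaps


# Illustrative data with a hypothetical "size" field.
training = [{"size": "enterprise"}] * 80 + [{"size": "smb"}] * 20
pipeline = [{"size": "enterprise"}] * 50 + [{"size": "smb"}] * 50
print(representation_gaps(training, pipeline, key="size"))
```

Here enterprise deals are over-represented in training by about 30 points relative to the pipeline the model will actually score.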

To measure fairness, use group fairness metrics like demographic parity or equal opportunity. Tools like Fairlearn, IBM AI Fairness 360, or Amazon SageMaker Clarify can help quantify disparities in predictions. Additionally, implement explainability tools such as SHAP or LIME to clarify which factors - like deal size, engagement levels, or past win rates - are influencing forecast outcomes [18][1].
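To make the two metrics concrete, here they are computed by hand for a model that predicts whether a deal will close, with sales segment standing in for the "group". Demographic parity compares how often each group is predicted to close. Equal opportunity compares true positive rates, meaning how many of the deals that actually closed were predicted to. Fairlearn provides functions such as `demographic_parity_difference` and `equalized_odds_difference` in `fairlearn.metrics`; this dependency-free version is only a sketch for understanding the numbers, and the data is illustrative.

```python
def _group_rates(values_by_group):
    """Mean of 0/1 values per group, skipping empty groups."""
    return {g: sum(v) / len(v) for g, v in values_by_group.items() if v}


def demographic_parity_difference(y_pred, groups):
    """Largest gap between groups in the share of deals predicted to close (y_pred == 1)."""
    by_group = {}
    for pred, group in zip(y_pred, groups):
        by_group.setdefault(group, []).append(pred)
    rates = _group_rates(by_group)
    return max(rates.values()) - min(rates.values())


def equal_opportunity_difference(y_true, y_pred, groups):
    """Largest gap between groups in true positive rate, among deals that actually closed."""
    by_group = {}
    for true, pred, group in zip(y_true, y_pred, groups):
        if true == 1:
            by_group.setdefault(group, []).append(pred)
    rates = _group_rates(by_group)
    return max(rates.values()) - min(rates.values())


# Illustrative predictions; the "group" here is the sales segment.
groups = ["enterprise"] * 4 + ["smb"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
print(demographic_parity_difference(y_pred, groups))        # 0.75 - 0.25
print(equal_opportunity_difference(y_true, y_pred, groups))  # 1.0 - 0.5
```

A value of 0 means parity. How large a gap is acceptable is a policy decision, not something the metric settles.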

Once you've identified issues through the audit, integrate these ethical insights into your operational processes to make them actionable.

Integrating Ethical Checks into Workflows

After validating your models, embed ethical checks into your workflows. Add metrics like fairness scores and bias indicators to your model monitoring dashboards, right alongside standard KPIs like accuracy and uptime [18]. This ensures ethical performance is monitored with the same diligence as technical performance.

Introduce human review at critical decision points. For example, before implementing changes to sales territories or quotas, have experienced team members review AI-generated forecasts. They can flag outputs that don't align with on-the-ground realities, such as assigning a high probability to a deal that's been stalled for weeks [1].
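A review gate like this can start as a few explicit rules. The sketch below flags the mismatch described above (high AI probability, no recent activity) plus any deal large enough to affect territory or quota decisions. The `Deal` fields and all three thresholds are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str
    ai_probability: float          # model's close probability, 0-1
    days_since_last_activity: int  # days since the last logged call, email, or meeting
    amount: float                  # deal value in dollars


def review_reasons(deal, prob_threshold=0.7, stall_days=21, large_deal=250_000):
    """Return the reasons a deal's AI forecast needs human review (empty list if none).

    Thresholds are hypothetical defaults, not recommended values.
    """
    reasons = []
    if deal.ai_probability >= prob_threshold and deal.days_since_last_activity >= stall_days:
        reasons.append(
            f"AI probability {deal.ai_probability:.0%} but no activity "
            f"for {deal.days_since_last_activity} days"
        )
    if deal.amount >= large_deal:
        reasons.append("large deal: manager sign-off before it feeds quota or territory changes")
    return reasons


pipeline = [
    Deal("Acme renewal", 0.85, 28, 120_000),
    Deal("Globex expansion", 0.55, 3, 400_000),
    Deal("Initech pilot", 0.40, 5, 30_000),
]
for d in pipeline:
    for reason in review_reasons(d):
        print(f"{d.name}: {reason}")
```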

Take inspiration from Qualtrics, which in 2025 centralized its go-to-market planning using the Fullcast platform. By replacing disconnected spreadsheets with a unified "Revenue Command Center", they improved data integrity and reduced hidden biases in their revenue processes [1].

Additionally, establish a quarterly review cycle to compare AI predictions with actual outcomes. This helps detect "drift" - when new biases emerge over time - and recalibrate your models as needed [1][18].
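A quarterly drift check can compare each new quarter's error against a baseline. The sketch below uses mean absolute percentage error (MAPE), takes the first two quarters as the baseline, and flags any later quarter whose MAPE rises more than five percentage points above it. The metric, baseline length, and threshold are all assumptions, and fairness metrics can be tracked the same way.

```python
def mape(forecasts, actuals):
    """Mean absolute percentage error; actuals must be non-zero."""
    return sum(abs(f - a) / abs(a) for f, a in zip(forecasts, actuals)) / len(actuals)


def drift_flags(quarters, baseline_quarters=2, max_increase=0.05):
    """quarters: list of (label, forecasts, actuals) in chronological order.

    Flags quarters after the baseline whose MAPE exceeds the baseline average
    by more than max_increase (absolute, e.g. 0.05 = 5 percentage points).
    """
    if len(quarters) <= baseline_quarters:
        return []
    errors = [(label, mape(f, a)) for label, f, a in quarters]
    baseline = sum(e for _, e in errors[:baseline_quarters]) / baseline_quarters
    return [label for label, e in errors[baseline_quarters:] if e - baseline > max_increase]


# Illustrative quarterly forecasts vs. actuals for two segments.
quarters = [
    ("Q1", [110, 95], [100, 100]),
    ("Q2", [105, 100], [100, 100]),
    ("Q3", [100, 102], [100, 100]),
    ("Q4", [130, 125], [100, 100]),
]
print(drift_flags(quarters))
```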

Comparing Ethical Strategies

Building on audits and integrated checkpoints, organizations can refine forecasting with targeted ethical strategies. Each approach serves a specific purpose, and combining them can create a robust framework tailored to your needs.

| Ethical Strategy | Primary Goal | Key Action | Best For |
|---|---|---|---|
| Diverse Data Collection | Reduce Selection Bias | Include activity, relationship, and engagement signals beyond CRM data [1]. | Organizations with legacy data gaps or new market segments. |
| Human Oversight | Prevent Feedback Loops | Validate forecasts before high-stakes resource allocation decisions [1]. | High-stakes resource allocation and territory planning. |
| Bias Detection Tools | Quantitative Validation | Use Fairlearn or AIF360 for demographic parity and equal opportunity metrics [18]. | Compliance-heavy industries like finance and healthcare. |
| Transparency/XAI | Build Trust & Accountability | Use SHAP or LIME to explain factors driving forecast outcomes [18][1]. | Complex B2B sales where teams need to justify projections. |
| Continuous Monitoring | Long-term Calibration | Regularly compare AI predictions with actual outcomes to detect drift [1][18]. | All organizations using AI forecasting. |

Combining strategies often yields the best results. For instance, pairing diverse data collection with continuous monitoring creates a feedback loop for ongoing improvement. Adding transparency tools further ensures that teams not only act on forecasts but also trust the reasoning behind them.

Coach Pilot's Role in Ethical Revenue Forecasting

Coach Pilot addresses the challenges of AI revenue forecasting with a systematic, transparent approach designed to reduce guesswork. A key issue it targets is "Hope-Based Forecasting", where hasty manual CRM updates skew data and introduce bias. Instead of relying on those updates, Coach Pilot captures deal data in real time, supporting the goal of ethical, transparent forecasts [19].

AI-Driven Coaching for Fair and Accurate Predictions

By embedding AI coaching directly into workflows - such as calls, emails, and meetings - Coach Pilot reduces reliance on manual data entry, which often introduces errors or bias. This real-time data capture leads to more reliable and fair forecasts. Customers have reported 7.8 times pipeline growth in less than 90 days, a 39% increase in quota attainment, and an average of 19.5 hours saved per sales rep each week [20].

"That's our mission: to make every rep sell like your best rep." [19]

Custom Playbooks and Analytics to Minimize Bias

Coach Pilot adapts to each company’s unique strategies by training on its successful playbooks, messaging, and deal stages [27][29]. It transforms these strategies into a dynamic "Living Playbook", offering consistent, actionable guidance to every sales rep. This structured approach not only reduces performance gaps but also strengthens ethical forecasting by providing clear "next best step" recommendations during live deals [19].

Integrating Ethical Practices Into Workflows

The platform goes a step further by embedding these tailored playbooks into secure workflows that adhere to SOC 2 and ISO 27001 standards for data privacy and compliance [20]. Coach Pilot also includes a bias auditing framework, reporting a false positive rate disparity of 0.027 [21]. By flagging missing deal elements in real time, it supports accountability and objectivity in forecasts.

"Revenue is predictable because execution is consistent." [19]

With only 45% of sales leaders expressing confidence in their forecasts [13], Coach Pilot’s integration of ethical checks directly into everyday workflows helps build fairness and transparency into every prediction.

Key Takeaways for Ethical AI Revenue Forecasting

Using ethical AI practices can significantly boost forecast accuracy. Studies show that AI-powered forecasting can cut errors by 20–50% compared to traditional methods [1]. But to achieve these results, organizations must tackle systematic bias, emphasize explainability, and ensure human oversight throughout the process. Without these measures, AI forecasting tends to skew predictions in one direction, and these inaccuracies can grow over time [1].

To address these challenges, companies should focus on several key actions: auditing training data, expanding data sources beyond CRM systems, implementing Explainable AI (XAI), and involving humans in critical decision-making [1]. These steps help mitigate ethical risks and improve forecasting outcomes.

"The best forecasts happen when humans and machines challenge each other" [5]

The potential benefits are enormous. Sales leaders dealing with accuracy issues can see forecast reliability reach 90% or higher when combining AI with human expertise [5]. This level of precision supports better resource planning, transparent compensation models, and smarter investment strategies.

Regulatory requirements add another layer of urgency. Rules like the EU AI Act, which entered into force in August 2024 with obligations phasing in from 2025 onward, and U.S. FTC guidelines demand transparency and controls to manage bias [5]. To comply, businesses must create accountability systems and implement ongoing monitoring to ensure ethical practices.

Coach Pilot offers tools to meet these demands by embedding ethical AI directly into sales processes. The platform combines tailored sales playbooks, hands-on training, and AI-driven coaching to reduce errors and ensure forecasts are grounded in reliable data and human oversight. This approach delivers consistent, ethical revenue predictions.

FAQs

How do I spot bias in my revenue forecasts?

To spot bias in revenue forecasts, keep an eye out for systematic errors. These might include repeated overestimations or underestimations, or an uneven emphasis on certain segments or deal types without clear business justification. Compare forecast patterns with actual results over time to uncover trends, such as optimism bias. It's also crucial to routinely review the data inputs and assumptions behind your forecasting models. This habit helps identify and correct biases early, leading to more reliable predictions.
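A standard way to test for repeated over- or underestimation is the tracking signal from demand forecasting: the running sum of forecast errors divided by the mean absolute deviation (MAD). A commonly cited rule of thumb treats values beyond roughly ±4 as a sign of bias. Because the signal's magnitude can never exceed the number of periods, it needs at least five or so periods of history to be meaningful. The threshold and sample figures below are illustrative.

```python
def tracking_signal(forecasts, actuals):
    """Sum of errors (actual - forecast) divided by mean absolute deviation.

    Negative values mean forecasts run consistently high (optimism);
    positive values mean they run consistently low.
    """
    errors = [a - f for f, a in zip(forecasts, actuals)]
    if not errors:
        raise ValueError("need at least one period")
    mad = sum(abs(e) for e in errors) / len(errors)
    return 0.0 if mad == 0 else sum(errors) / mad


def is_biased(forecasts, actuals, limit=4.0):
    """True when the tracking signal falls outside +/- limit (rule-of-thumb default)."""
    return abs(tracking_signal(forecasts, actuals)) > limit


# Illustrative monthly forecasts against actuals of 100.
optimistic = tracking_signal([110, 120, 115, 125, 130], [100] * 5)
balanced = tracking_signal([105, 95, 110, 90, 100], [100] * 5)
print(optimistic, balanced)
```

Five straight months of over-forecasting push the signal to its floor of -5, while errors that alternate in sign cancel out to 0.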

What should an AI forecasting audit include?

An AI forecasting audit plays a crucial role in evaluating the accuracy, reliability, and potential biases of AI models used for revenue predictions. Here's what such an audit should focus on:

Identifying Forecasting Bias: Check if the model consistently overestimates or underestimates outcomes. This helps pinpoint any systematic errors in predictions.

Ensuring Data Quality: High-quality, relevant data is essential for trustworthy forecasts. The audit should verify that the input data is clean, complete, and representative.

Verifying Assumptions: Scrutinize the assumptions built into the model to ensure they align with real-world conditions and logical reasoning.

Performance Evaluation: Compare the model’s predictions against industry benchmarks and historical data to determine its effectiveness.

Reviewing Model Transparency: Ensure that stakeholders can understand how the forecasts are generated. Clear explanations build trust and allow for better decision-making.

By addressing these areas, the audit not only enhances prediction accuracy but also ensures ethical practices are upheld.

How can we use customer data safely for forecasting?

To handle customer data responsibly for forecasting, it's crucial to adopt ethical practices and strong data management strategies. Start by collecting data in a way that avoids any form of bias or discrimination. Be transparent about how the data will be used, and always adhere to privacy laws like GDPR or CCPA.

Some critical steps include:

Securing data: Implement measures to protect data from breaches or unauthorized access.

Anonymizing sensitive information: Strip away personal identifiers to safeguard individual privacy.

Maintaining data accuracy: Use proper data hygiene practices to ensure the information is reliable and up-to-date.

This careful approach not only protects individual rights but also fosters trust and ensures your AI-driven forecasts are both fair and dependable.
